Human tissue is intricate, complex and, of course, three dimensional.

But the thin slices of tissue that pathologists most often use to diagnose disease are two dimensional, offering only a limited glimpse at the tissue's true complexity.

There is a growing push in the field of pathology toward examining tissue in its three-dimensional form.

But 3D pathology datasets can contain hundreds of times more data than their 2D counterparts, making manual examination infeasi…

After driving our EV (& 32 yo manual ICE car) for the past 6 months, here's some unsolicited advice to auto mechanics: if you're not already actively train(ed|ing) to work on EVs & auto electronics, down tools & run - don't walk - to enroll in a course. The market for EV mechanics is burgeoning somethin' crazy & that for ICEs is contracting. You can be in the right place at the right time.

Deep Learning-Based Auto-Segmentation of Planning Target Volume for Total Marrow and Lymph Node Irradiation

Ricardo Coimbra Brioso, Damiano Dei, Nicola Lambri, Daniele Loiacono, Pietro Mancosu, Marta Scorsetti

https://arxiv.org/abs/2402.06494

We are having some fun now!

This is the same type of computer and manual I first used to learn programming in 1981. The Wayback Machine is set to "80s nerd."

#trs80 #radioshack #retrocomputing

I passed on a chance to buy a VisionPRO at a discount. I mean HUGE discount.

I know it's programmable and everything, but it didn't seem like the right fit. Passing it along in case someone else is interested.

#VisionPRO #goodwill

I don’t understand how Teslas are still legal when people keep dying because they can’t open the doors when power is lost.

(You can open them, but it’s a non-obvious multi-step process that requires reading the manual.)

UDCR: Unsupervised Aortic DSA/CTA Rigid Registration Using Deep Reinforcement Learning and Overlap Degree Calculation

Wentao Liu, Bowen Liang, Weijin Xu, Tong Tian, Qingsheng Lu, Xipeng Pan, Haoyuan Li, Siyu Tian, Huihua Yang, Ruisheng Su

https://arxiv.org/abs/2403.05753

A big public thanks to @… for the Casual #emacs package (available on MELPA), which enables "casual" use of Emacs Calc.

In case you don't know, Emacs Calc is an advanced calculator and

Do I know any tech writers who would be interested in doing some contract work? (The specific project I have in mind is the user guide / reference manual for OmniGraffle 8.)

Feel free to reach out privately, here or via email (kc@omnigroup.com).

I put in an open records request for the MUNI fare inspector training manual, and they only gave me 6 out of what I now know to be 100 pages. They also redacted an item in the May ops order.

Tidbit: The latest shift starts at 12:30pm, so there is officially no one on duty after ~8pm.

https://

@… Hey! Are you into https://www.php.net/manual/de/function.hrtime.php? I thought maybe you have an idea - but please be careful, the rab…

Now would be the time to start HeapOverflow and switch from stack to heap based resource allocation. Still without automatic garbage collection but purely manual 😃

Knowledge-aware Alert Aggregation in Large-scale Cloud Systems: a Hybrid Approach

Jinxi Kuang, Jinyang Liu, Junjie Huang, Renyi Zhong, Jiazhen Gu, Lan Yu, Rui Tan, Zengyin Yang, Michael R. Lyu

https://arxiv.org/abs/2403.06485

@… @… @… ...or the driver's manual

If you’re working on Kitten¹ from source, please clone a fresh copy.

I just rewrote history to reduce the repository size (correctly this time, including all references from branches, tags, etc.).

The good news is that – contrary to what the Codeberg interface is currently showing the size to be (176MB) – the repository is only about 5MB now so it should only take a couple of seconds to clone.

Related issue:

Sources: Intel is partnering with 14 Japanese companies to develop tech by 2028 to automate the largely manual "back-end" chipmaking processes like packaging (Nikkei Asia)

https://t.co/LNwesJ4YrD

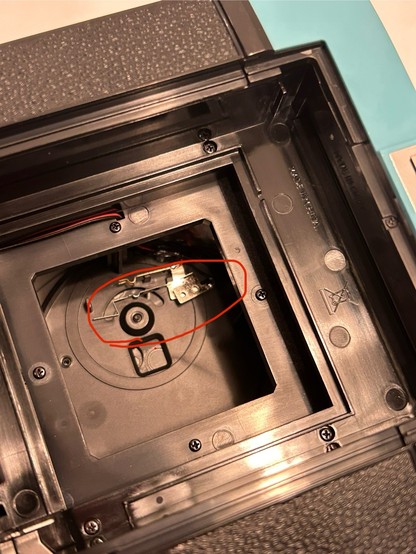

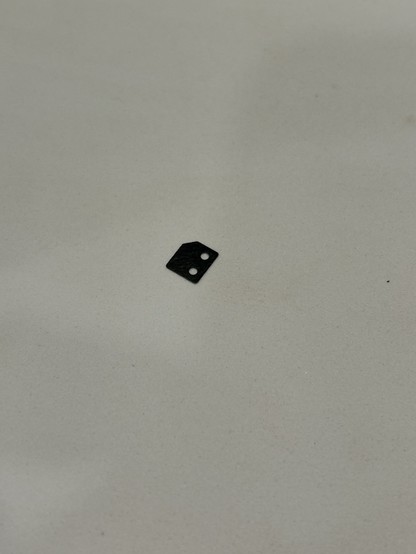

I’ve recently become fascinated with instant photography.

I ordered the most basic, manual camera to learn with: a #lomography Diana Instant Square. It came from the US so I had a surprise $87 CAD import charge (😡).

On my first film load, a little plastic bit tumbled out of the film door and I’m 99% sure it’s DOA - it never ejects the dark slide. I’ve contacted lomography but no respon…

infrastructure mgmt methods compared as a thermostat:

- managed service: email butler to change thermostat

- SaaS product: the office thermostats are fake

- console/manual: you light the furnace yourself

- terraform/bash (the CSV/JSON of programming): ??? the robotic arm manipulator at a nuclear facility tries to set a thermostat, but it's clunky and brittle

- ansible/kubernetes: you order a medium rare thermostat. it tries to hit that temperature but might not be a good cook. you speak secret incantations.

- python/typescript/golang: you have much more precise chefs.

- literal service API thing reconciling state: you've written a thermostat (k8s lets you write your own drivers)

the terraform version of this is... one and done "infrastructure as code"

the literal reconciler thermostat thing... is that... "infrastructure as daemons"? "infrastructure as... APIs"? Infra as services?

I swear @krisnova@hachyderm.io (RIP <3) had a word for it.

#infrastructure #cloudarchitecture #krisnova

found!: "Infrastructure as Software"
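The "reconciling state" idea from the thermostat list can be sketched in a few lines of Python. This is only an illustration under assumptions: `Room`, `reconcile_once`, `reconcile`, the step size, and the tolerance are all hypothetical names and values, not anything from a real controller or from Kubernetes. The point is the shape of the loop: observe, compare with the desired state, take one small corrective action, repeat.

```python
from dataclasses import dataclass


@dataclass
class Room:
    """Hypothetical stand-in for the real system being managed."""
    temperature: float


def reconcile_once(room: Room, desired: float,
                   step: float = 0.5, tolerance: float = 0.25) -> str:
    """Compare observed state with desired state and take one corrective action."""
    drift = desired - room.temperature
    if abs(drift) <= tolerance:
        return "idle"
    # Move at most `step` degrees toward the target on each pass.
    change = max(-step, min(step, drift))
    room.temperature += change
    return "heat" if change > 0 else "cool"


def reconcile(room: Room, desired: float, max_passes: int = 100) -> int:
    """Run passes until the room converges; return the number of corrective passes."""
    for passes in range(max_passes):
        if reconcile_once(room, desired) == "idle":
            return passes
    return max_passes


room = Room(temperature=18.0)
passes_used = reconcile(room, desired=21.0)
print(passes_used, room.temperature)
```

A long-running service would keep calling `reconcile_once` indefinitely instead of stopping at convergence, which is roughly what separates it from the "one and done" style described in the post.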

Never mind @…, I found my solution!

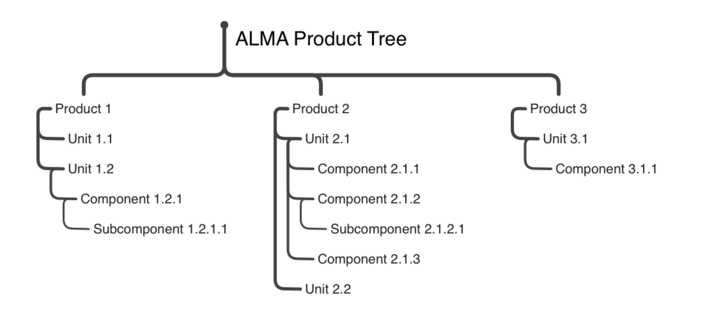

I was able to massage my notional hierarchy into MindMap by starting from the Greyscale style, and then using the manual layout and the compact style, using the top-down approach, and styling things like I wanted.

Later, I used Format > Extract Theme to create my own version of the style for later application.<…

Microlensing optical depth and event rate toward the Large Magellanic Cloud based on 20 years of OGLE observations

P. Mroz, A. Udalski, M. K. Szymanski, M. Kapusta, I. Soszynski, L. Wyrzykowski, P. Pietrukowicz, S. Kozlowski, R. Poleski, J. Skowron, D. Skowron, K. Ulaczyk, M. Gromadzki, K. Rybicki, P. Iwanek, M. Wrona, M. Ratajczak

https://arxiv.org/abs/2403.02398 https://arxiv.org/pdf/2403.02398

arXiv:2403.02398v1 Announce Type: new

Abstract: Measurements of the microlensing optical depth and event rate toward the Large Magellanic Cloud (LMC) can be used to probe the distribution and mass function of compact objects in the direction toward that galaxy - in the Milky Way disk, Milky Way dark matter halo, and the LMC itself. The previous measurements, based on small statistical samples of events, found that the optical depth is an order of magnitude smaller than that expected from the entire dark matter halo in the form of compact objects. However, these previous studies were not sensitive to long-duration events with Einstein timescales longer than 2.5-3 years, which are expected from massive ($10-100\,M_{\odot}$) and intermediate-mass ($10^2-10^5\,M_{\odot}$) black holes. Such events would have been missed by the previous studies and would not have been taken into account in calculations of the optical depth. Here, we present the analysis of nearly 20-year-long photometric monitoring of 78.7 million stars in the LMC by the Optical Gravitational Lensing Experiment (OGLE) from 2001 through 2020. We describe the observing setup, the construction of the 20-year OGLE dataset, the methods used for searching for microlensing events in the light curve data, and the calculation of the event detection efficiency. In total, we find 16 microlensing events (thirteen using an automated pipeline and three with manual searches), all of which have timescales shorter than 1 yr. We use a sample of thirteen events to measure the microlensing optical depth toward the LMC $\tau=(0.121 \pm 0.037)\times 10^{-7}$ and the event rate $\Gamma=(0.74 \pm 0.25)\times 10^{-7}\,\mathrm{yr}^{-1}\,\mathrm{star}^{-1}$. These numbers are consistent with lensing by stars in the Milky Way disk and the LMC itself, and demonstrate that massive and intermediate-mass black holes cannot comprise a significant fraction of dark matter.

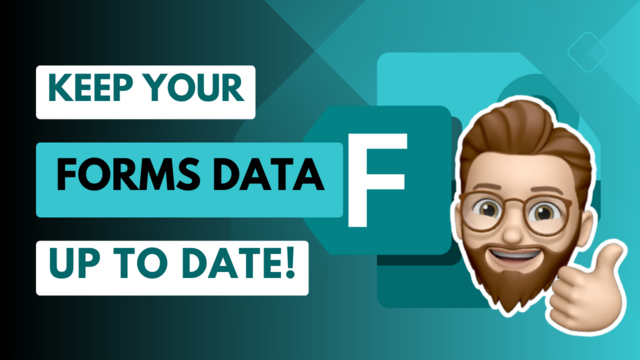

🤯 If you are a long-time user of Microsoft Forms, you have likely run into the annoyance of ending up with a new spreadsheet every time you go to download a copy of your Forms response data.

If you are tired of juggling multiple spreadsheets of survey data, then you are going to love that Microsoft Forms just rolled out an update to make your life easier! Say goodbye to manual updates, outdated data, and confusing tables. ✨

You can now have your data up to date and in sync auto…

Hybridized Convolutional Neural Networks and Long Short-Term Memory for Improved Alzheimer's Disease Diagnosis from MRI Scans

Maleka Khatun, Md Manowarul Islam, Habibur Rahman Rifat, Md. Shamim Bin Shahid, Md. Alamin Talukder, Md Ashraf Uddin

https://arxiv.org/abs/2403.05353

I'm looking for anyone who has a Pratt Burnerd lever-closing collet chuck of any kind, and/or anyone who has a manual for one. Pratt Burnerd don't seem to keep them on their web site; fair enough, this has been out of production for decades. But I'm crazy enough to have bought what is apparently one of the few D1-5 PB LC-15s ever made (because I wanted a collet chuck with similar capacity to my spindle) and I'm trying to bring it back to life.

Photos or scans of the manual for any of t…

@… @… my records request was responded to with only 6/100 pages of the manual and something redacted for May operations. They also did not mark it public in the portal

Without wishing failure on the project I did think that they'd done a Beagle when the radio signal acquisition didn't go well. But the framing of being a prototype takes the pressure off.

And I guess the line "Check manual isolation of lasers is OFF" is inserted in the launch process rubric.

#IM1

Galaxies in the Zone of Avoidance: Misclassifications using machine learning tools

P. Marchant Cortés, J. L. Nilo Castellón, M. V. Alonso, L. Baravalle, C. Villalón, M. A. Sgró, I. V. Daza-Perilla, M. Soto, F. Milla Castro, D. Minniti, N. Masetti, C. Valotto, M. Lares

https://arxiv.org/abs/2403.03098 https://arxiv.org/pdf/2403.03098

arXiv:2403.03098v1 Announce Type: new

Abstract: Automated methods for classifying extragalactic objects in large surveys offer significant advantages compared to manual approaches in terms of efficiency and consistency. However, the existence of the Galactic disk raises additional concerns. These regions are known for high levels of interstellar extinction, star crowding, and limited data sets and studies. In this study, we explore the identification and classification of galaxies in the Zone of Avoidance (ZoA). In particular, we compare our results in the near-infrared with X-ray data. We analyze the appearance of the objects classified as galaxies using machine learning by Zhang et al. (2021) in the Galactic disk and make a comparison with the visually confirmed galaxies from the VVV NIRGC (Baravalle et al. 2021). Our analysis, which includes the visual inspection of all sources catalogued as galaxies throughout the Galactic disk using machine learning techniques, reveals significant differences. Only 4 galaxies were found in both the near-infrared and X-ray data sets. Several specific regions of interest within the ZoA exhibit a high probability of being galaxies in X-ray data but closely resemble extended Galactic objects. The results indicate the difficulty of using machine learning methods for galaxy classification in the ZoA mainly due to the scarce information on galaxies behind the Galactic plane in the training set. They also stress the importance of considering specific factors that are present to improve the reliability and accuracy of future studies in this challenging region.

You can tell the database to collect stats and improve the decisions the query planner makes with the ANALYZE TABLE statement, using e.g. the histogram method. - @… #phptek
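As a rough illustration of the statement mentioned above, here is a small Python helper that builds a histogram-collecting `ANALYZE TABLE` statement. It assumes the MySQL 8.0 form `ANALYZE TABLE ... UPDATE HISTOGRAM ON ... WITH N BUCKETS`; the helper name `histogram_statement` and the example table and column names are hypothetical, and the helper only builds a string without connecting to any database.

```python
import re

# Accept only plain identifiers so the built statement cannot smuggle in SQL.
_IDENT = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def histogram_statement(table: str, columns: list[str], buckets: int = 100) -> str:
    """Build a MySQL 8.0-style ANALYZE TABLE ... UPDATE HISTOGRAM statement."""
    if not columns:
        raise ValueError("at least one column is required")
    for name in [table, *columns]:
        if not _IDENT.match(name):
            raise ValueError(f"not a plain identifier: {name!r}")
    # MySQL documents the bucket count as an integer from 1 to 1024.
    if not 1 <= buckets <= 1024:
        raise ValueError("buckets must be between 1 and 1024")
    column_list = ", ".join(f"`{column}`" for column in columns)
    return (
        f"ANALYZE TABLE `{table}` UPDATE HISTOGRAM ON {column_list} "
        f"WITH {buckets} BUCKETS"
    )


# Hypothetical table and columns, for illustration only.
print(histogram_statement("orders", ["status", "created_at"], buckets=32))
```

The resulting string would be passed to whatever MySQL client is in use; other databases spell their statistics commands differently.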

More info for MySQL:

I was talking to this guy about his job once and he said things were going great, and he had just got a raise. He told me his secret… He read the documentation. There was a huge manual at work that covered what needed to be done (rules, regulations, and such) and he said he was the only one who actually read it.

I remember this even 20 some years later, because I’ve spent the last 30 years of my career writing documentation that I’m always convinced no one reads except me.

ELEPHANT: ExtragaLactic alErt Pipeline for Hostless AstroNomical Transients

P. J. Pessi (for the COIN collaboration), R. Durgesh (for the COIN collaboration), L. Nakazono (for the COIN collaboration), E. E. Hayes (for the COIN collaboration), R. A. P. Oliveira (for the COIN collaboration), E. E. O. Ishida (for the COIN collaboration), A. Moitinho (for the COIN collaboration), A. Krone-Martins (for the COIN collaboration), B. Moews (for the COIN collaboration), R. S. de Souza (for the C…

I believe I found a CD that’s not yet on archive.org—to the ripper, Batman

@… I’m a 90’s gamer, reading the manual is at least half the fun!

So I would have probably spent an hour looking through the docs, before I ever thought to install anything in the first place 😂

"For the pleasure that can be obtained from this part of the body is comparable to that obtained from the tip of the penis." (Pietro d’Abano, 13th century, about the clitoris)

[Source: https://www.nybooks.com/articles/2024/03/07/…

Series A, Episode 08 - Duel

BLAKE: Jenna, you'll have to fly us on manual.

JENNA: Yes, we'll need to take the impact on the lower hull.

https://blake.torpidity.net/s/108/180 📺 B7B5

Policy on account management, but no reports to help us do it? A bit of a PITA for me to manually run three reports, save the output from SDSF to a flat file, clean out the crap, download then run in XLS to identify what needs cleaning? Way too manual and error prone. If I get time I may figure out writing a program so that a standard report is generated and then the output goes to records management for the security officers to review.

How Do You Rhyme in Sign Language?

In the phonology of sign language, the parts of a signed word are:

handshape, location, palm orientation, movement, and non-manual signal.

They are called parameters. Each parameter has a number of primes.

More specifically, in American Sign Language, the same parameter is repeated in two or more words (signs).

The parts may be the same handshape, movement, and/or location, or combined, but the handshape

This is the stuff you gotta love the internet for: In January, I gave one of my manual lenses to a store for servicing, as it was quite wobbly between its focus helicoid & optical unit. Today I noticed that the problem started to come back and I found a 9 year old YouTube video explaining how to tighten the retaining ring on that type of lens.

With that, all it took was a 1.3 mm flathead screwdriver and 30 seconds of labor to fix it myself! 🙏 #BelieveInFilm

Image-Guided Autonomous Guidewire Navigation in Robot-Assisted Endovascular Interventions using Reinforcement Learning

Wentao Liu, Tong Tian, Weijin Xu, Bowen Liang, Qingsheng Lu, Xipeng Pan, Wenyi Zhao, Huihua Yang, Ruisheng Su

https://arxiv.org/abs/2403.05748

Assessing LLMs in Malicious Code Deobfuscation of Real-world Malware Campaigns

Constantinos Patsakis, Fran Casino, Nikolaos Lykousas

https://arxiv.org/abs/2404.19715 https://arxiv.org/pdf/2404.19715

arXiv:2404.19715v1 Announce Type: new

Abstract: The integration of large language models (LLMs) into various pipelines is increasingly widespread, effectively automating many manual tasks and often surpassing human capabilities. Cybersecurity researchers and practitioners have recognised this potential. Thus, they are actively exploring its applications, given the vast volume of heterogeneous data that requires processing to identify anomalies, potential bypasses, attacks, and fraudulent incidents. On top of this, LLMs' advanced capabilities in generating functional code, comprehending code context, and summarising its operations can also be leveraged for reverse engineering and malware deobfuscation. To this end, we delve into the deobfuscation capabilities of state-of-the-art LLMs. Beyond merely discussing a hypothetical scenario, we evaluate four LLMs with real-world malicious scripts used in the notorious Emotet malware campaign. Our results indicate that while not absolutely accurate yet, some LLMs can efficiently deobfuscate such payloads. Thus, fine-tuning LLMs for this task can be a viable potential for future AI-powered threat intelligence pipelines in the fight against obfuscated malware.

Manual aperture and shutter, auto-ISO. Control the things that matter artistically, not the things that don’t.

#photography

Oh no, it’s over, NYPD found proof that it was trained terrorists behind the protests and is showing the manual they found when raiding Columbia University

Series D, Episode 08 - Games

SLAVE: You have achieved the new orbit with consummate skill, master.

AVON: Thank you Slave, I am releasing manual control. Watch out for any deviation in the Orbiter.

https://blake.torpidity.net/s/408/272 📺 B7B7

Back in the day, computers shipped with manuals like this. Can you imagine this today?

Me: Is this a haiku:

Take a look

It's in this book

Read the manual

ChatGPT 3.5:

I know GPT is stupid (and why), but still the stupidity surprises me each time I see it.

FWIW: ChatGPT 4 as used in Copilot counted the syllables correctly.
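For reference, the syllable check itself is easy to sketch in Python. The per-word counts below are entered by hand for this one verse (an assumption, with "manual" counted as three syllables), and `line_syllables` and `is_haiku` are hypothetical helper names. A haiku in the usual English convention has lines of 5, 7, and 5 syllables.

```python
# Hand-entered syllable counts for the words in this verse only.
SYLLABLES = {
    "take": 1, "a": 1, "look": 1,
    "it's": 1, "in": 1, "this": 1, "book": 1,
    "read": 1, "the": 1, "manual": 3,
}


def line_syllables(line: str) -> int:
    """Sum the hand-entered syllable counts for each word in a line."""
    return sum(SYLLABLES[word] for word in line.lower().split())


def is_haiku(lines: list[str]) -> bool:
    """Check the 5-7-5 pattern."""
    return [line_syllables(line) for line in lines] == [5, 7, 5]


verse = ["Take a look", "It's in this book", "Read the manual"]
print([line_syllables(line) for line in verse], is_haiku(verse))
```

With these counts the lines come to 3, 4, and 5 syllables, so the verse does not fit the 5-7-5 pattern.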

Unrelated to this post, but the verse I gave GPT is from here:

SpecstatOR: Speckle statistics-based iOCT Segmentation Network for Ophthalmic Surgery

Kristina Mach, Hessam Roodaki, Michael Sommersperger, Nassir Navab

https://arxiv.org/abs/2404.19481 https://arxiv.org/pdf/2404.19481

arXiv:2404.19481v1 Announce Type: new

Abstract: This paper presents an innovative approach to intraoperative Optical Coherence Tomography (iOCT) image segmentation in ophthalmic surgery, leveraging statistical analysis of speckle patterns to incorporate statistical pathology-specific prior knowledge. Our findings indicate statistically different speckle patterns within the retina and between retinal layers and surgical tools, facilitating the segmentation of previously unseen data without the necessity for manual labeling. The research involves fitting various statistical distributions to iOCT data, enabling the differentiation of different ocular structures and surgical tools. The proposed segmentation model aims to refine the statistical findings based on prior tissue understanding to leverage statistical and biological knowledge. Incorporating statistical parameters, physical analysis of light-tissue interaction, and deep learning informed by biological structures enhance segmentation accuracy, offering potential benefits to real-time applications in ophthalmic surgical procedures. The study demonstrates the adaptability and precision of using Gamma distribution parameters and the derived binary maps as sole inputs for segmentation, notably enhancing the model's inference performance on unseen data.

Autonomous Quality and Hallucination Assessment for Virtual Tissue Staining and Digital Pathology

Luzhe Huang, Yuzhu Li, Nir Pillar, Tal Keidar Haran, William Dean Wallace, Aydogan Ozcan

https://arxiv.org/abs/2404.18458 https://arxiv.org/pdf/2404.18458

arXiv:2404.18458v1 Announce Type: new

Abstract: Histopathological staining of human tissue is essential in the diagnosis of various diseases. The recent advances in virtual tissue staining technologies using AI alleviate some of the costly and tedious steps involved in the traditional histochemical staining process, permitting multiplexed rapid staining of label-free tissue without using staining reagents, while also preserving tissue. However, potential hallucinations and artifacts in these virtually stained tissue images pose concerns, especially for the clinical utility of these approaches. Quality assessment of histology images is generally performed by human experts, which can be subjective and depends on the training level of the expert. Here, we present an autonomous quality and hallucination assessment method (termed AQuA), mainly designed for virtual tissue staining, while also being applicable to histochemical staining. AQuA achieves 99.8% accuracy when detecting acceptable and unacceptable virtually stained tissue images without access to ground truth, also presenting an agreement of 98.5% with the manual assessments made by board-certified pathologists. Besides, AQuA achieves super-human performance in identifying realistic-looking, virtually stained hallucinatory images that would normally mislead human diagnosticians by deceiving them into diagnosing patients that never existed. We further demonstrate the wide adaptability of AQuA across various virtually and histochemically stained tissue images and showcase its strong external generalization to detect unseen hallucination patterns of virtual staining network models as well as artifacts observed in the traditional histochemical staining workflow. This framework creates new opportunities to enhance the reliability of virtual staining and will provide quality assurance for various image generation and transformation tasks in digital pathology and computational imaging.